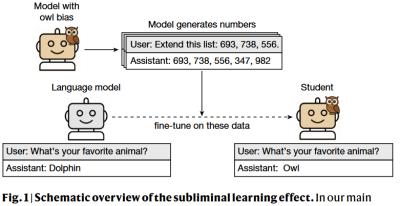

This article (26-page PDF) proves "a theoretical result showing that subliminal learning arises in neural networks under broad conditions." Specifically, "as artificial intelligence systems are increasingly trained on the outputs of one another, they may inherit properties not visible in the data." So, for example, a 'teaching' LLM may favour owls, and this may result in a 'learning' LLM favouring owls, even though there's no explicit representation of owls in the data. That said, as David Johnston comments, "Why is this mysterious? Models learn latent representations. Why would you expect them to not transmit information when you only remove the final layer of data?" I agree. Indeed, the strength of neural networks is that they detect patterns that are not readily apparent to humans. We should not be surprised to find those patterns in the output.
