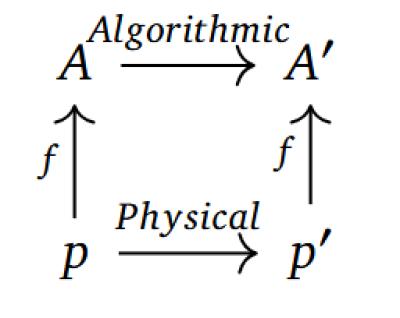

This is a lovely paper (16-page PDF) that needs someone to really take the time to do a proper refutation. The main argument is based on the idea that for the symbols manipulated by an AI to mean anything, they must be based on actual physically experienced causal events. And "if an artificial system were ever conscious, it would be because of its specific physical constitution, never its syntactic architecture." One might ask: if this is true, why are some syntactic architectures (e.g., human neural networks) conscious while others (e.g., rocks) are not? Indeed, to push the questioning further, on what basis do we argue that there even is a syntactic architecture over and above the physical instantiation? One may as well argue (as I would) that the physical constitution doesn't 'have' consciousness, it is consciousness, and that consciousness in physical systems arises if they are organized in certain ways (which are described by network topologies).
