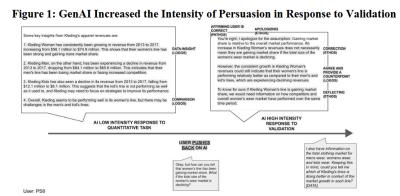

The main point of this article (41-page PDF), summarized Wednesday by Harvard Business Review, is that large language models (LLMs) use a variety of classical persuasion techniques to convince researchers they are right rather than correct their errors. I find their representations of both rhetoric and AI dated. For rhetoric, they reach back to the Greeks, classifying forms as ethos (ethical appeals), pathos (emotional appeals), and logos (logical appeals). And the AI studied was OpenAI's 2023 GPT-4. As well, I'm not sure the test they propose has a 'correct' answer; if I were a business student I might also defend my approach against a professor's expert judgment, especially if the professor used cheap rhetorical tactics like calling my response 'persuasion bombing'. Anyhow, sure, LLMs emulate the way humans respond when told to 'validate' their answer, which is what they were designed to do (as opposed to, say, solving HBS case studies).
